TL;DR

- Fit: Supabase sits in the database layer. Start the evaluation by defining decision speed, implementation overhead, and operational risk for the first ninety days, and treat onboarding as a process-design project rather than a UI-preference exercise.

- Economics: The listed Free tier is only one part of total cost of ownership. Model both a conservative and an optimized rollout, and keep a temporary dual-run period when replacing legacy tools.

- Performance and risk: Supabase currently tracks an observed response time of around 541 ms and catalog sentiment near 4.9/5 across 500k+. Read those numbers against your own region footprint, compliance obligations, and incident tolerance, and run a bounded pilot with success criteria defined up front before converting.

Supabase in 2026: Procurement and Performance Guide

Supabase sits in the database layer, where teams usually lose time to fragmented workflows, unclear ownership, and disconnected reporting. A serious evaluation should start by defining targets for decision speed, implementation overhead, and operational risk over the first ninety days. In procurement reviews, the teams that extract the most value from Supabase map it against concrete outcomes such as cycle-time reduction, handoff quality between departments, and improved auditability. The tool is generally strongest when the buyer treats onboarding as a process-design project rather than a UI-preference exercise. Teams with a tighter operating cadence usually see value faster; slower organizations should phase the rollout by business unit and capture baseline metrics before migration. That approach prevents noisy adoption data and makes renewal decisions cleaner.

On economics, evaluate Supabase beyond surface pricing. The listed Free tier is only one part of total cost of ownership; the bigger variables are training load, integration maintenance, change-management effort, and support escalation patterns over time. Buyers should model at least two scenarios: a conservative rollout with minimal automation and an optimized rollout with deeper integration. In most cases the second scenario has a higher setup cost but lower operational friction after the first quarter. StackCompare benchmarking also suggests that organizations with formal governance checkpoints outperform ad hoc implementations on both user retention and feature adoption. If you are replacing legacy tools, keep a temporary dual-run period to validate data integrity and preserve historical reporting continuity.
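The two-scenario comparison can be sketched in a few lines of Python. This is a minimal illustration, not a pricing model: the class name, field names, and every dollar figure below are hypothetical placeholders, and the only input taken from this guide is the Free listed tier (a license cost of 0). Replace the numbers with your own estimates for training, integration maintenance, and support.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RolloutScenario:
    """One rollout plan. All monetary fields are hypothetical inputs."""
    name: str
    setup_cost: float        # one-time cost: integration work, training, migration
    monthly_license: float   # 0 for the listed Free tier
    monthly_ops_cost: float  # recurring cost: maintenance, support, manual work

    def cumulative_cost(self, months: int) -> float:
        """Total cost of ownership after the given number of months."""
        return self.setup_cost + months * (self.monthly_license + self.monthly_ops_cost)


def break_even_month(baseline: RolloutScenario,
                     candidate: RolloutScenario,
                     horizon: int = 36) -> Optional[int]:
    """First month at which the candidate's cumulative cost is no higher
    than the baseline's, or None if that never happens within the horizon."""
    for month in range(1, horizon + 1):
        if candidate.cumulative_cost(month) <= baseline.cumulative_cost(month):
            return month
    return None


# Made-up figures for illustration only.
conservative = RolloutScenario("conservative", setup_cost=5_000,
                               monthly_license=0, monthly_ops_cost=3_000)
optimized = RolloutScenario("optimized", setup_cost=14_000,
                            monthly_license=0, monthly_ops_cost=1_500)

if __name__ == "__main__":
    for scenario in (conservative, optimized):
        print(scenario.name, scenario.cumulative_cost(12))
    print("break-even month:", break_even_month(conservative, optimized))
```

With these placeholder inputs the optimized rollout costs more up front and catches up with the conservative one at month 6. The useful output is the break-even month itself: if it falls beyond your planning horizon, the deeper integration is hard to justify on cost alone.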

From a performance and risk standpoint, Supabase currently tracks an observed response time of around 541 ms and holds catalog sentiment near 4.9/5 across 500k+. Those numbers are directionally strong, but they should be interpreted alongside your own region footprint, compliance obligations, and incident tolerance. A mature decision sequence includes a security review, an admin-permissions audit, sandbox validation, and at least one process simulation with real stakeholders. Teams that skip the simulation often misjudge edge cases that surface only after launch. The highest-confidence buying path is to run a bounded pilot, define success criteria up front, and convert only after usage behavior proves durable. That creates a defensible renewal baseline and reduces vendor-switch volatility in the next planning cycle.
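One way to make "define success criteria up front" concrete is to write the criteria down as data and evaluate the pilot against them mechanically. The sketch below is an assumption-laden example, not a StackCompare method: the thresholds, the weekly-active-ratio metric, and the sample measurements are all hypothetical, and "durable" is interpreted here as meeting the usage threshold for a fixed number of consecutive recent weeks.

```python
import math
from dataclasses import dataclass
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of the samples (pct in the range 0-100)."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


@dataclass(frozen=True)
class PilotCriteria:
    """Success criteria agreed before the pilot starts (hypothetical fields)."""
    max_p95_latency_ms: float       # latency budget measured from your own region
    min_weekly_active_ratio: float  # share of pilot users active in a given week
    min_consecutive_weeks: int      # how long usage must hold to count as durable


def pilot_passes(latencies_ms: Sequence[float],
                 weekly_active_ratios: Sequence[float],
                 criteria: PilotCriteria) -> bool:
    """True only if the latency budget is met and usage held for the last N weeks."""
    if percentile(latencies_ms, 95) > criteria.max_p95_latency_ms:
        return False
    recent = list(weekly_active_ratios)[-criteria.min_consecutive_weeks:]
    return (len(recent) == criteria.min_consecutive_weeks
            and all(r >= criteria.min_weekly_active_ratio for r in recent))


# Made-up pilot measurements for illustration only.
latencies = [480, 510, 541, 560, 530, 600, 495, 520, 550, 700]
weekly_active = [0.40, 0.55, 0.65, 0.70, 0.72]
criteria = PilotCriteria(max_p95_latency_ms=800,
                         min_weekly_active_ratio=0.6,
                         min_consecutive_weeks=3)

if __name__ == "__main__":
    print("p95 latency (ms):", percentile(latencies, 95))
    print("convert:", pilot_passes(latencies, weekly_active, criteria))
```

Fixing the thresholds before the pilot begins is the point of the exercise: it keeps the conversion decision from being argued after the fact, and the same criteria can be rerun at renewal time as the baseline.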

Performance Analysis

Supabase Pros

- Streamlined user onboarding.

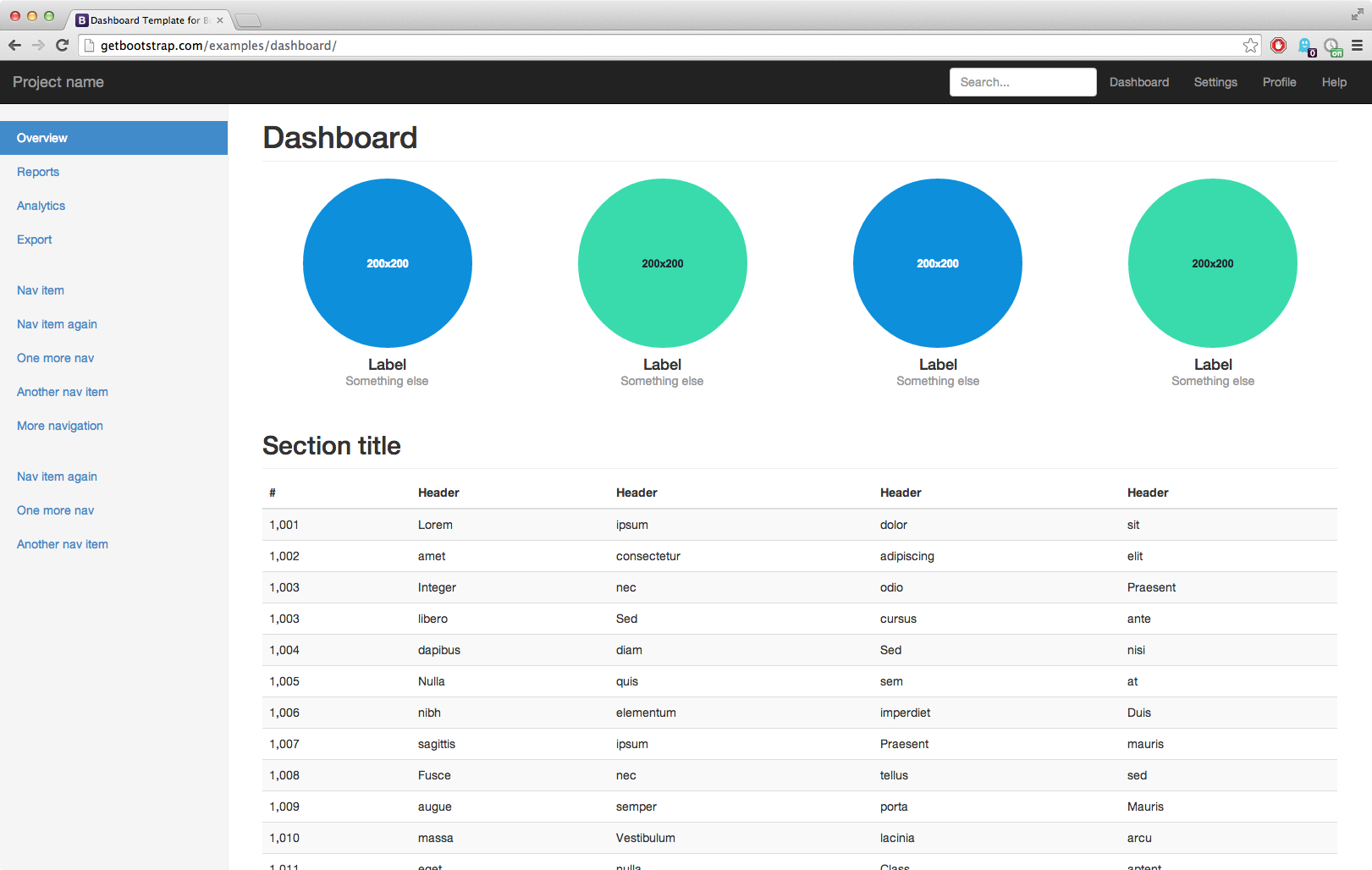

- Highly customizable dashboard.

- Generous free-forever tier.

Supabase Cons

- Advanced features require premium plans.

- Smaller community marketplace.

Final Provisioning Decision

Our audit confirms Supabase is a high-performance choice for database infrastructure.